How to Think About AI for Your Fund

A framework for PE firms navigating the AI tool and vendor explosion

There are more AI tools going after private equity right now than anyone can keep track of, and most of the demos look identical. Everybody promises the same thing: faster diligence, smarter deal flow, better portfolio monitoring. It’s hard to know what to believe.

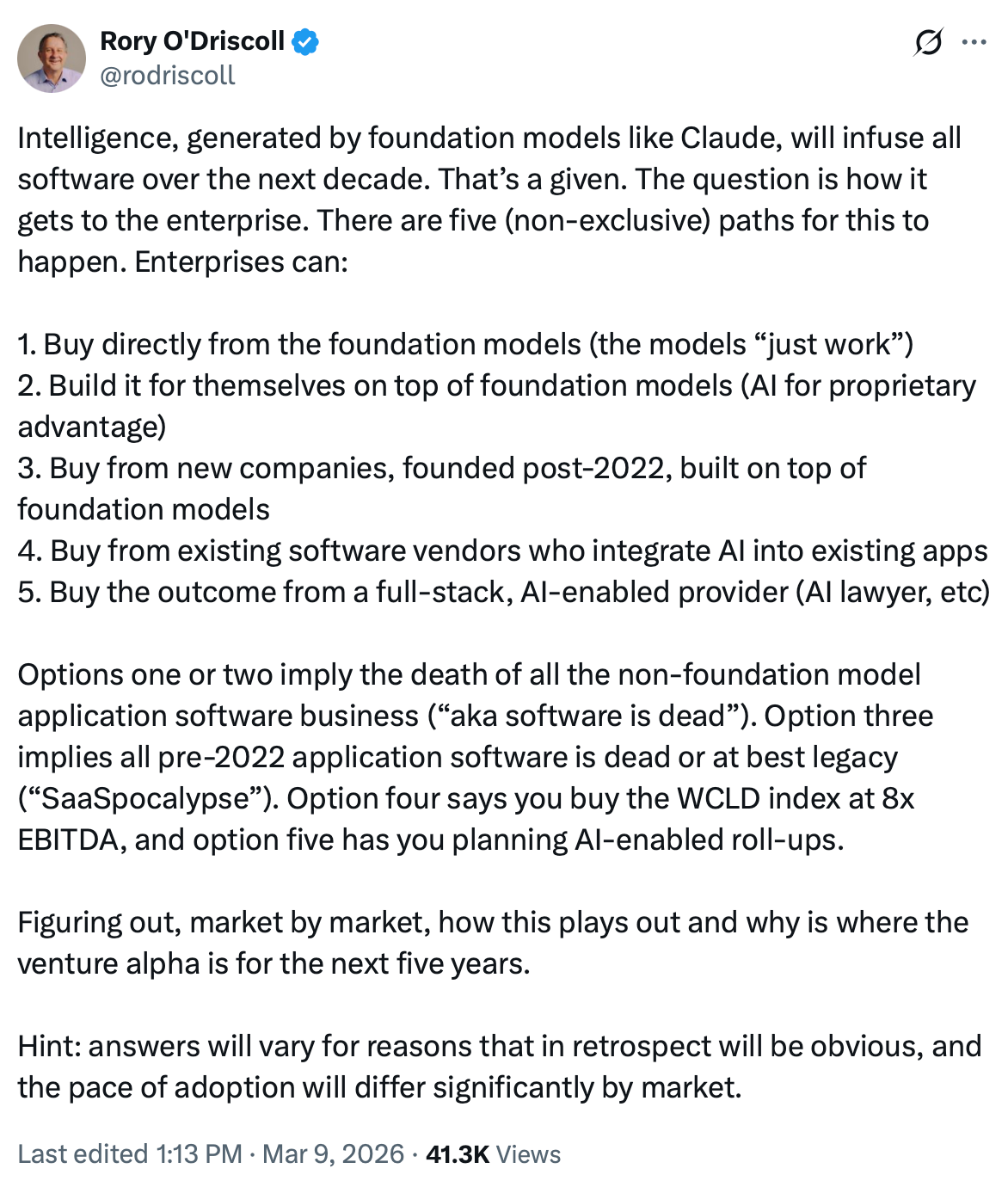

The single most important thing to know is that the companies building the underlying AI are moving up the stack and building the vertical tools themselves. Anthropic has rolled out plugins for Excel and PowerPoint. With ChatGPT 5.4, OpenAI is highlighting multi-format file generation and a focus on professional services. In just the past week, news broke that Anthropic is partnering with Blackstone and other PE firms to form an AI consulting venture. And two days ago, reports leaked that OpenAI is looking to do the same with TPG, Advent, Bain, and Brookfield Asset Management.

This means the application you’re paying for today is likely to become just a feature in the next Claude release. It also means the firms that come out ahead will be the ones that keep their data portable, stay flexible on tooling, and build workflows around how they actually work.

From that starting point, three principles should guide how you approach AI for your fund, covering software contracts, data, and implementation.

Avoid long-term contracts

Whatever tool you buy today will look fundamentally different in six months. A few months ago, you might have paid for a separate tool to generate PowerPoint slides “using AI.” That’s now handled natively inside Claude, which not only carries context from your past conversations but also integrates with the tools you already use. Doing the work inside a rapidly improving foundation model is simply a better experience than stitching together a set of disparate tools that may or may not keep up.

Even if you’re skeptical of that trajectory, the pace of change alone should give you pause. Software engineering is the canary in the coal mine. Ask software engineers which AI tools they use for coding and you’ll get a different answer from each one, and often a different answer from the same person six months later. Our own team has cycled through Codex, Claude, and Cursor over the past year. Preferences shift, models leapfrog each other, and the landscape reshuffles faster than any contract should assume.

Signing a long-term deal with any AI vendor right now means betting on their roadmap, which is a dangerous game to play.

Own your data

Most of these tools are a polished interface on top of the same AI models you already have access to. The cost of building custom software has collapsed: one fund we worked with went through a full demo process with every major AI-for-PE platform and then decided to build in-house. The sheer number of platforms competing for your attention is itself a signal of how commoditized the interface layer has become.

What isn’t commoditized is your data and your process. Your data should stay yours, made actionable by layering the right tools on top of it, not deposited into a vendor’s database where it gets structured around their choices and locked into their interface. How often have you wanted a product to behave a certain way, only to get frustrated when you couldn’t get it to do something that seemed simple? When a single vendor owns your data, you inherit their constraints every time you want to do something new with it.

The most sophisticated customers we work with have internalized this. They’re moving away from vendor UIs and toward direct API and MCP (Model Context Protocol) access, building lightweight interfaces around their own data rather than the other way around. The work happens inside foundation model chat apps, integrated directly with their data. The UI is almost an afterthought.
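
To make “lightweight interfaces around your own data” a little more concrete, here is a minimal sketch, assuming the structured fields extracted from deal documents live in a plain SQLite file the firm controls. The table name, column names, and helper functions are all hypothetical, chosen only for illustration. The point is the shape: any script, chat integration, or MCP server you connect later can read the same file, and a full export is a single function call rather than a vendor support ticket.

```python
import json
import sqlite3

# Hypothetical schema: one row per deal, holding fields an extraction step
# might pull from a CIM. Names are illustrative, not a standard.
SCHEMA = """
CREATE TABLE IF NOT EXISTS deals (
    deal_id TEXT PRIMARY KEY,
    company TEXT NOT NULL,
    sector TEXT,
    revenue_musd REAL,
    ebitda_margin REAL
)
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a firm-owned deal store in a plain SQLite file."""
    conn = sqlite3.connect(path)
    conn.row_factory = sqlite3.Row  # rows can be converted with dict(row)
    conn.execute(SCHEMA)
    return conn


def upsert_deal(conn: sqlite3.Connection, deal: dict) -> None:
    """Insert a deal, or replace the existing row with the same deal_id."""
    conn.execute(
        "INSERT OR REPLACE INTO deals VALUES "
        "(:deal_id, :company, :sector, :revenue_musd, :ebitda_margin)",
        deal,
    )
    conn.commit()


def export_all(conn: sqlite3.Connection) -> str:
    """Dump every record to JSON so the data can leave with you."""
    rows = conn.execute("SELECT * FROM deals ORDER BY deal_id").fetchall()
    return json.dumps([dict(row) for row in rows], indent=2)
```

Passing a file path such as `"deals.db"` to `open_store` persists the store on disk; SQLite is just one portable choice here, and a data warehouse or plain files would serve the same purpose.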

Build for your process, not theirs

Generic platforms give every fund the same experience. Your firm’s edge is in the specifics: your thesis criteria, workflows, and decision process. In other words, it is your institutional memory, which currently lives in historical files and your team members’ heads. Off-the-shelf tools don’t capture that, and without it they don’t get adopted.

The firms doing this well are rethinking how they operate, identifying where AI actually accelerates their process, and deliberately building those workflows. Concretely, that looks like:

Automatically drafting first-pass versions of memos and one-pagers: As new CIMs arrive, their system extracts key data and generates one-pagers built around the way they think, in the layout they’ve spent decades perfecting. The extracted data is also stored in a structured form, so they can compare each new deal against similar ones and call out anomalies.

Ingesting data room documents and automatically categorizing and summarizing each one: The results reduce friction for the whole team, since everyone can see what’s available, which speeds up the diligence process.

Kicking off deep research tasks based on specific triggers: For example, pulling in the executive team’s backgrounds and experience and running a litigation scan on each of them.
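
Once deal data is stored in a structured form, the “compare against similar deals and call out anomalies” step can start as a simple statistical check. The sketch below uses a z-score test, which is one plain way to do it and not necessarily what any particular firm uses. It assumes each deal is a dict of numeric metrics; the metric names and the two-standard-deviation threshold are hypothetical choices.

```python
from statistics import mean, stdev

# Hypothetical metric names; a real system would use whatever fields the
# firm's extraction step actually produces.
METRICS = ("revenue_growth", "ebitda_margin", "customer_concentration")


def flag_anomalies(
    new_deal: dict[str, float],
    comparables: list[dict[str, float]],
    threshold: float = 2.0,
) -> dict[str, float]:
    """Return metrics whose z-score vs. comparable deals exceeds the threshold.

    The z-score is how many standard deviations the new deal sits from the
    comparables' average. Metrics with fewer than two comparable values, or
    missing from the new deal, are skipped.
    """
    flagged = {}
    for metric in METRICS:
        values = [d[metric] for d in comparables if metric in d]
        if metric not in new_deal or len(values) < 2:
            continue  # not enough history to judge this metric
        sd = stdev(values)
        if sd == 0:
            continue  # all comparables identical; z-score undefined
        z = (new_deal[metric] - mean(values)) / sd
        if abs(z) > threshold:
            flagged[metric] = round(z, 2)
    return flagged
```

Choosing which deals count as “similar” (sector, size band, vintage) is the part that encodes the firm’s judgment; the arithmetic itself is the easy bit.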

The ideal solution is a best-of-breed integration. The underlying capabilities move so quickly that you need to design your system for flexibility: anchor it on the parts that will not change, your data and your workflows, and then layer evolving improvements on top.

How to evaluate what’s in front of you

Given all of this, comparing features across platforms is the wrong approach. Any third-party product will end up too generic for your firm’s specific MO, so the right questions to ask are:

Can you take your data with you? More specifically, can you export everything the tool has analyzed? Can you access the structured data through an API or MCP? If the vendor disappeared tomorrow, would you still have your intelligence?

Does it bend to you, or do you bend to it? Can the tool be configured to match how your firm actually works — your IC format, your processes, your thesis criteria — or are you reshaping your process to fit their product?

Can you swap out the AI model when something better ships? Are you locked into one model, or can the system be pointed at whatever performs best for a given task?
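
Being able to say yes to the model-swap question mostly comes down to one design choice: workflow code should ask for a task, not a vendor. Below is a minimal sketch, assuming a hypothetical `ModelClient` interface and stub clients in place of real vendor SDKs (the model names are placeholders). Under that setup, moving a task to a better model is a one-line change to the routing table.

```python
from dataclasses import dataclass
from typing import Protocol


class ModelClient(Protocol):
    """Anything that turns a prompt into text. Real clients would wrap a
    vendor SDK; here the interface itself is the point."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubClient:
    """Hypothetical stand-in for a real model client (makes no network calls)."""

    name: str

    def complete(self, prompt: str) -> str:
        # Echo a prefix of the prompt, tagged with the model that "answered".
        return f"[{self.name}] {prompt[:40]}"


# Task-to-model routing lives in one table, not scattered through workflows.
ROUTES: dict[str, ModelClient] = {
    "cim_summary": StubClient("model-a"),
    "litigation_scan": StubClient("model-b"),
}


def run_task(task: str, prompt: str, routes: dict[str, ModelClient] = ROUTES) -> str:
    """Workflow code names a task; the routing table picks the model."""
    return routes[task].complete(prompt)
```

For example, `ROUTES["cim_summary"] = StubClient("model-c")` reroutes every CIM summary without touching the workflow that calls `run_task`. In practice each stub would wrap a provider’s own SDK, and keeping that wrapper thin is what keeps the switch cheap.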

The firms that answer those questions well will have built something that keeps working regardless of which tool wins.